HMI Lab

Overview

The Human-Machine Interaction Laboratory is housed in room 0.37, on the ground floor. It is a small but versatile lab that can be used as a fixed-base simulator for either cars or aircraft, or as a platform for control-task experiments and visual perception research. The screens and devices in the lab are controlled by up to 6 computers, linked together in a high-speed Ethernet network. The lab is split into an observation room and an experiment room. Normally the experimenter works from the observation room, controlling the simulation, while subjects fly or drive the simulation from the experiment room.

Equipment

The “aircraft side” of the simulation features:

- A fully adjustable aircraft seat, from a Breguet Atlantique, installed on the right-hand side.

- A control-loaded hydraulic side stick, with ±30° excursion in roll and ±22° excursion in pitch.

- A set of electrically control-loaded rudder pedals (from an actual Fokker F27), with the brake pedals loaded by a passive spring.

- Controls on the pedestal: two throttle levers, a flap lever and a speedbrake lever.

- A 3-position gear handle with associated gear lights.

- Two 18″ LCD panels for the instrument displays, one installed in portrait mode, both with a resolution of 1280 × 1024 pixels.

- A mode control panel for operating a simulated autopilot.

The “car side” of the laboratory features:

- A control-loaded steering wheel (FCS) with a NISSAN wheel.

- A control-loaded accelerator pedal.

- A passive spring-loaded brake pedal.

- A luxury car seat (NISSAN).

- A 12″ LCD panel for the instrument displays, with a resolution of 1024 × 768 pixels.

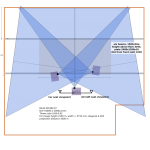

Both sides share a single outside visual projection system. That system is driven by two PCs, whose graphics cards drive a total of 3 DLP projectors with HD resolution. The projectors illuminate a three-sided projection screen and cover a field of view of over 180°, providing an immersive experience. Several options are available for generating the projected image: OpenSceneGraph, OGRE or FlightGear.
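To give a feel for what these figures mean for visual detail, the sketch below estimates the average angular resolution of the projection. It rests on assumptions that are not stated above: that "HD" means 1920 pixels across per projector, that the three images tile the screen without overlap, and that the field of view is exactly 180°. The function name is made up for this illustration.

```python
def average_angular_resolution(fov_deg, n_projectors, pixels_wide):
    """Return (pixels per degree, arcminutes per pixel), averaged over the screen.

    This ignores projector overlap and the fact that a flat screen segment
    has a finer angular pixel pitch towards its edges than at its centre.
    """
    total_pixels = n_projectors * pixels_wide
    px_per_deg = total_pixels / fov_deg
    arcmin_per_px = 60.0 / px_per_deg
    return px_per_deg, arcmin_per_px


# Assumed values: 180 degree field of view, 3 projectors, 1920 pixels wide each.
px_per_deg, arcmin_per_px = average_angular_resolution(180.0, 3, 1920)
print(f"{px_per_deg:.1f} px/deg, {arcmin_per_px:.3f} arcmin/px")
```

Under these assumptions the average comes out at 32 pixels per degree. Since the actual field of view is larger than 180°, the real figure would be somewhat lower.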

Infrastructure

Eye tracking data can be collected in both car driving and flying experiments. The lab is driven by a stack of 6 PCs running Ubuntu Linux, with a kernel adapted with the PREEMPT_RT patch, which gives these systems real-time behaviour with high-precision timing. Communication with the various devices in the lab (stick, rudder pedals, gas pedals, gear handle) is handled by an array of EtherCAT IO cards. All PCs are equipped with DUECA, a middleware layer that enables easy implementation of real-time experiment programs. DUECA allows you to develop and tune a simulation on a desktop PC and then transfer that simulation to the lab, where the different parts (modules) of the simulation can be assigned to the appropriate computers.
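When moving a simulation from a desktop PC to a lab machine, it can be useful to confirm that the machine is actually running a real-time kernel. The following is a minimal sketch of such a check, not part of DUECA or of the lab's own tooling. It relies on two conventions of PREEMPT_RT kernels: the flag file `/sys/kernel/realtime`, and the `PREEMPT_RT` (older kernels: `PREEMPT RT`) marker in the kernel version string. The function name is hypothetical.

```python
import platform
from pathlib import Path


def is_realtime_kernel(version_string, realtime_flag=Path("/sys/kernel/realtime")):
    """Heuristically report whether the running kernel is a PREEMPT_RT kernel.

    version_string: kernel version text, e.g. from platform.version().
    realtime_flag:  file that PREEMPT_RT kernels expose with the content "1".
    """
    try:
        if realtime_flag.read_text().strip() == "1":
            return True
    except OSError:
        # Flag file missing or unreadable: fall back to the version string.
        pass
    return "PREEMPT_RT" in version_string or "PREEMPT RT" in version_string


if __name__ == "__main__":
    print("real-time kernel:", is_realtime_kernel(platform.version()))
```

On a standard desktop kernel the check should report `False`. A positive result only shows that the kernel is real-time capable, not that a given simulation meets its timing requirements.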

Example projects

A range of driving simulation, flight simulation and perception-oriented projects is possible in the lab. Some directions:

- Understanding lateral steering behaviour, and evaluation of haptic (force on the steering wheel) support systems.

- Modelling speed choice in car driving.

- Identification of the haptic admittance of the neuromuscular system and its variability, and investigation of the admittance settings people choose under different driving conditions.

- Basic experiments on the effect of display format and preview length on manual control.

- Identification of neuromuscular admittance when controlling with side sticks.

- Basic experiments into the perception of stick dynamics.

- Flight tasks investigating the role of haptic support systems.