Identifying and modeling how humans use visual and physical motion feedback for control.

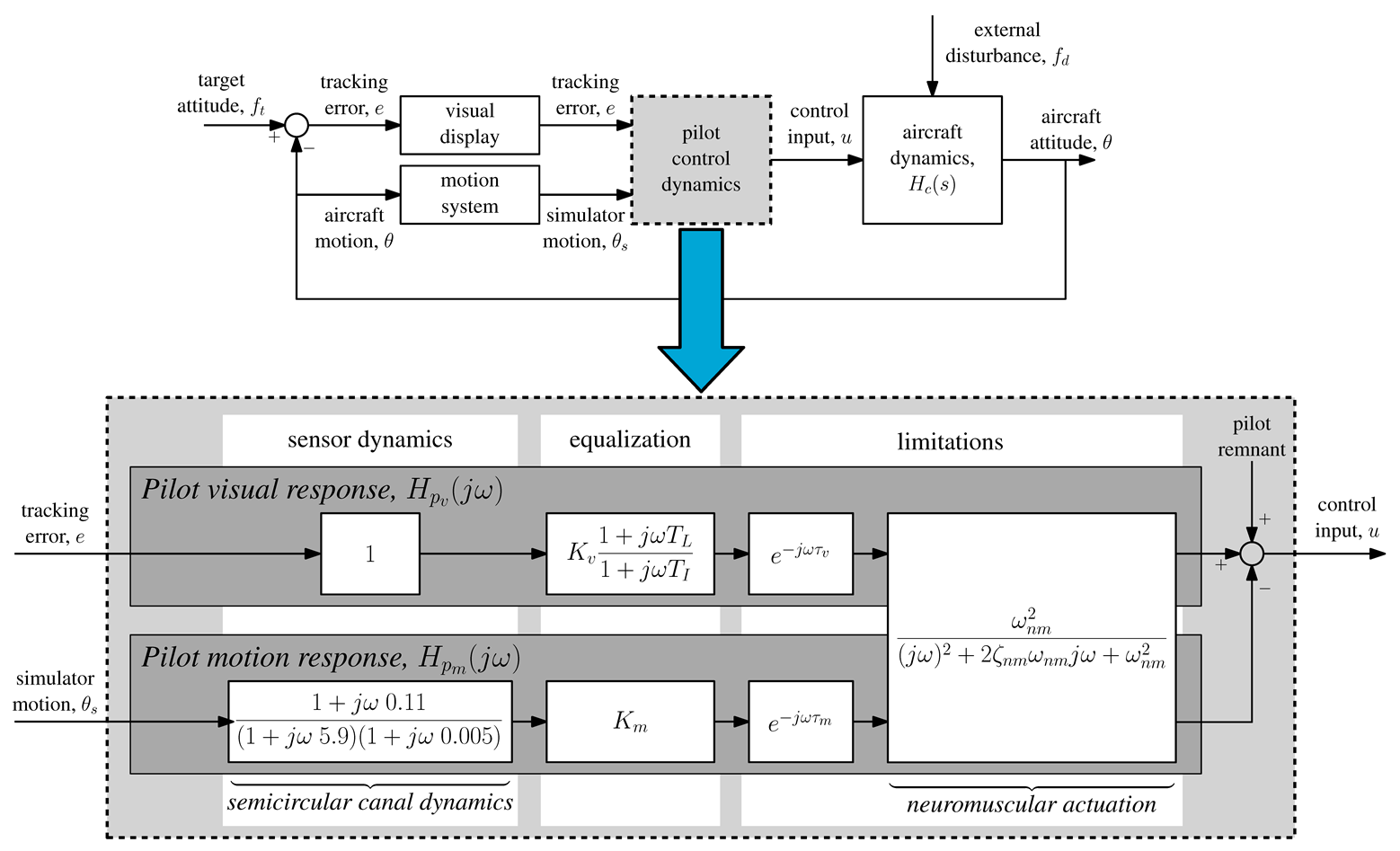

In many realistic manual control tasks, human controllers use both visual and physical motion feedback to control their vehicles. Understanding how these different feedback signals are integrated and used for control is essential, for example, for evaluating the effects of simulator cueing fidelity on pilot control behavior. In this project, we work on developing human operator models with a multiple-input single-output structure that explicitly capture the parallel processing and integration of different feedback signals. An example of such a multi-channel human control model, which includes separate parallel responses based on visual and motion feedback, is shown in the figure below.

The main challenge in the analysis of human multi-channel control behavior is that it is, from a control and modeling perspective, an overdetermined problem. The different control responses that human operators use generally have very similar dynamic characteristics and make interchangeable contributions to the overall control behavior. For example, while the motion feedback response shown above is known to provide human controllers with lead, this lead is not essential for good control: when only visual cues are available, it can also be generated through the visual response. In virtually all cases, this gives multi-channel human control models an overdetermined model structure; that is, multiple different combinations of model parameters can yield an almost identical model response and a similarly good fit to experimental data. For this reason, it is far from straightforward to obtain parameter estimates that are reliable enough to evaluate the relative contributions of the different control responses, and changes therein. Therefore, in this project we also work on system identification and parameter estimation methods that allow for explicit separation of different parallel control responses.
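The interchangeability described above can be illustrated with a minimal numerical sketch. It is not the project's actual model: the channel structures, the sign convention `u = Hv*e - Hm*x`, and all parameter values are assumptions chosen for illustration. In a task with only a disturbance (zero target), the error equals `-x`, so the control signal depends only on the sum `Hv + Hm`, and two quite different parameter sets can produce the same lumped response.

```python
import numpy as np

def visual_response(w, gain, lead_time, delay):
    """Hypothetical visual channel: gain, optional lead, pure time delay."""
    return gain * (1 + 1j * w * lead_time) * np.exp(-1j * w * delay)

def motion_response(w, gain, delay):
    """Hypothetical motion channel: velocity-like feedback (gain * jw) with delay."""
    return gain * 1j * w * np.exp(-1j * w * delay)

w = np.logspace(-1, 1.5, 50)  # frequency grid in rad/s (assumed range)

# Parameter set A: all lead is generated visually, no motion response.
lumped_a = visual_response(w, 2.0, 0.5, 0.25) + motion_response(w, 0.0, 0.25)

# Parameter set B: no visual lead; the same lead comes from motion feedback
# (motion gain = visual gain * lead time = 2.0 * 0.5).
lumped_b = visual_response(w, 2.0, 0.0, 0.25) + motion_response(w, 1.0, 0.25)

# With zero target, e = -x, so u = Hv*e - Hm*x = (Hv + Hm)*e: only the sum matters.
identical = np.allclose(lumped_a, lumped_b)
print("Lumped responses identical:", identical)
```

Equal delays are used in both channels so that the two lumped responses match exactly; with different channel delays the match would only be approximate, which corresponds to the "almost identical" responses mentioned in the text.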

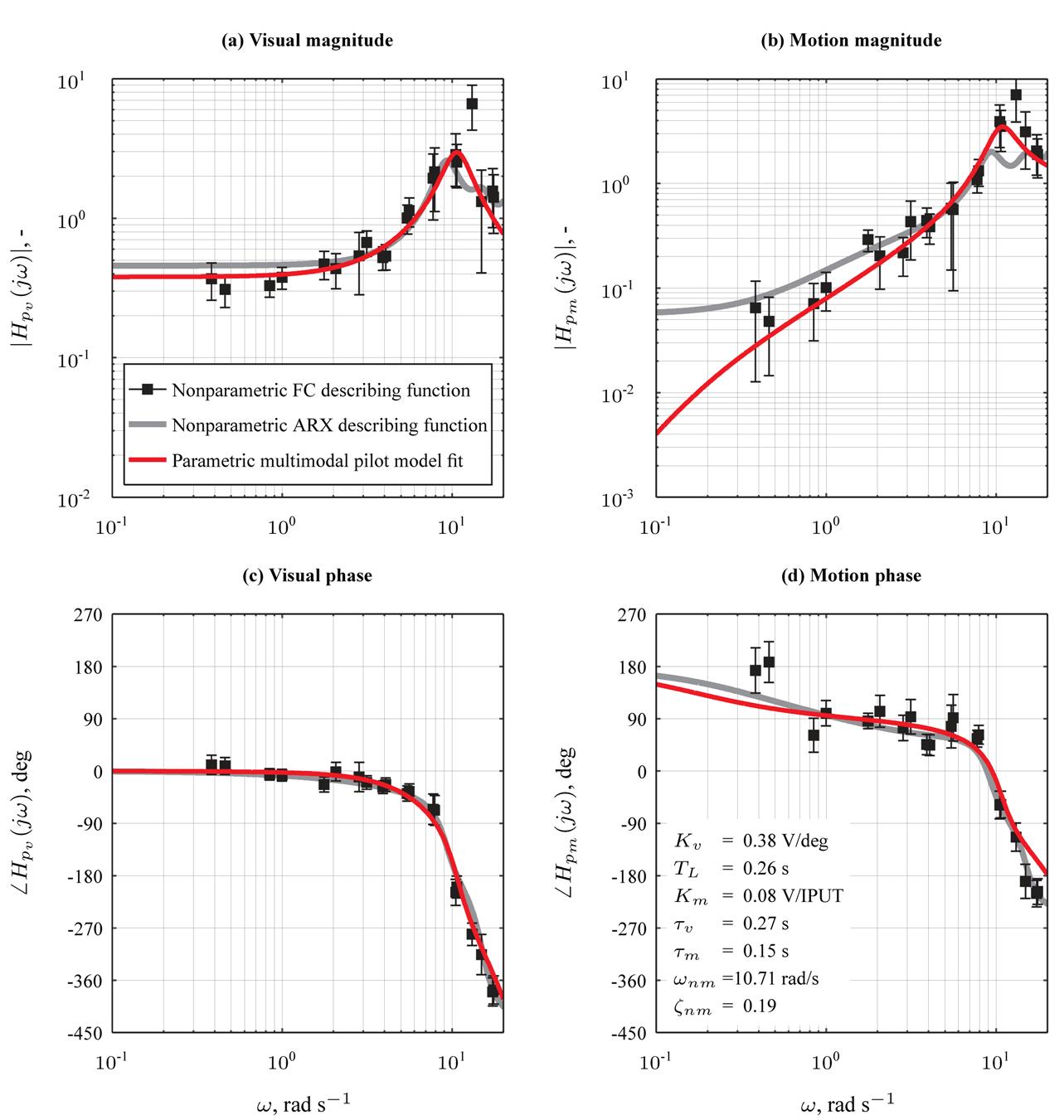

When, for example, parallel visual and motion feedback responses can be successfully separated, multi-channel human control modeling provides unique and quantitative insight into realistic human control behavior. With a multi-channel analysis of control behavior, we can reveal changes in control behavior that may not be apparent from a traditional (lumped) analysis of human input-output behavior. As shown with the example data below, with dedicated multi-channel system identification methods and multiple forcing function signals, it is possible to fully solve the overdetermined system problem and truly separate two largely interchangeable control responses. The adopted human control behavior can then also be quantified with a very limited set of physically interpretable multi-channel model parameters, such as human control gains and time delays.
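The role of the second forcing function can be sketched in the frequency domain. The example below is an idealized, noise-free illustration, not the identification method used in the project. It assumes a double-integrator controlled element, hypothetical "true" operator responses, the loop equations `e = ft - x`, `x = Hc*(u + fd)`, `u = Hv*e - Hm*x`, and that the responses to the target `ft` and the disturbance `fd` are both available on the same frequency grid (in practice this typically requires interpolation between the distinct frequencies of the two signals). Each forcing function then contributes one equation per frequency, giving a solvable 2x2 system for the two unknown responses.

```python
import numpy as np

w = np.logspace(-1, 1.2, 20)   # frequency grid in rad/s (assumed)
jw = 1j * w

# Hypothetical "true" dynamics, for illustration only.
Hc = 4.0 / jw**2                                        # controlled element
Hv_true = 0.5 * (1 + 0.3 * jw) * np.exp(-jw * 0.25)     # visual response
Hm_true = 0.4 * jw * np.exp(-jw * 0.15)                 # motion response

D = 1 + Hc * (Hv_true + Hm_true)   # closed-loop characteristic term

# Closed-loop signals for a unit target input (ft = 1, fd = 0).
X_t = Hc * Hv_true / D
E_t = 1 - X_t
U_t = X_t / Hc                     # from x = Hc*(u + fd) with fd = 0

# Closed-loop signals for a unit disturbance input (ft = 0, fd = 1).
X_d = Hc / D
E_d = -X_d
U_d = X_d / Hc - 1                 # from x = Hc*(u + fd) with fd = 1

# Per frequency: [U_t, U_d]^T = [[E_t, -X_t], [E_d, -X_d]] @ [Hv, Hm]^T
A = np.stack([np.stack([E_t, -X_t], axis=-1),
              np.stack([E_d, -X_d], axis=-1)], axis=-2)   # shape (N, 2, 2)
b = np.stack([U_t, U_d], axis=-1)[..., None]              # shape (N, 2, 1)

solution = np.linalg.solve(A, b)[..., 0]                  # shape (N, 2)
Hv_est, Hm_est = solution[:, 0], solution[:, 1]

print("Visual response recovered:", np.allclose(Hv_est, Hv_true))
print("Motion response recovered:", np.allclose(Hm_est, Hm_true))
```

With the disturbance alone, `E_d = -X_d` makes the two columns of the second row proportional, so only the sum of the two responses can be identified; the target signal supplies the independent second equation.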